Cloaking is a technique in which a website serves different content to search engines than to human visitors. The aim is to deceive the search engines in order to achieve a better ranking in the search results.

The fine line between optimisation and manipulation makes cloaking a fascinating aspect of search engine optimisation.

Cloaking encompasses various methods and practices that aim to deceive search engines.

You can avoid unintentional cloaking with these steps:

Check the robots.txt file: Make sure that your robots.txt file is configured correctly. This file tells search engine crawlers which pages or content they may crawl and which they may not. Incorrect settings can leave crawlers with a different view of a page than visitors get, which can look like cloaking.
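A quick way to sanity-check a robots.txt file is to parse it and ask which paths a given crawler may fetch. The following is a minimal sketch using Python's standard `urllib.robotparser`; the sample rules, the path list and the helper name `blocked_paths` are illustrative assumptions, not taken from a real site.

```python
from urllib.robotparser import RobotFileParser


def blocked_paths(robots_txt, paths, user_agent="Googlebot"):
    """Return the paths that robots_txt disallows for user_agent."""
    parser = RobotFileParser()
    # parse() expects an iterable of lines, not a single string.
    parser.parse(robots_txt.splitlines())
    return [p for p in paths if not parser.can_fetch(user_agent, p)]


# Hypothetical rules: blocking /css/ hides stylesheets from crawlers,
# so they may not be able to render the page the way visitors see it.
SAMPLE_ROBOTS = """\
User-agent: *
Disallow: /admin/
Disallow: /css/
"""

SAMPLE_PATHS = ["/", "/css/site.css", "/admin/login", "/blog/cloaking"]

blocked = blocked_paths(SAMPLE_ROBOTS, SAMPLE_PATHS)
print(blocked)
```

If a path that visitors need for rendering, such as a stylesheet, shows up in the result, that rule is worth a second look.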

Consistent content: Make sure that the content that search engine crawlers see matches the content that human visitors see. Avoid delivering different versions of the same page.
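One rough way to check consistency is to request the same URL with a browser user agent and a crawler user agent and compare the visible text. The sketch below uses only the Python standard library; the function names and the idea of a similarity score are assumptions for illustration. Note that it only catches differences keyed on the user agent string, not those keyed on IP address.

```python
import difflib
import urllib.request
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collects text nodes, ignoring the contents of <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.chunks = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html):
    """Return the text a reader would see, joined by single spaces."""
    extractor = _TextExtractor()
    extractor.feed(html)
    extractor.close()
    return " ".join(extractor.chunks)


def similarity(html_a, html_b):
    """Ratio between 0.0 and 1.0; 1.0 means the visible text is identical."""
    matcher = difflib.SequenceMatcher(None, visible_text(html_a), visible_text(html_b))
    return matcher.ratio()


def fetch(url, user_agent):
    """Download url while sending the given User-Agent header."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    with urllib.request.urlopen(request, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")
```

In practice you would call `fetch` twice for the same URL, once per user agent, and pass both results to `similarity`. A score well below 1.0 is a prompt to look closer, not proof of cloaking, because pages can legitimately differ between requests.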

User agent check: If you provide different content for different devices or browsers, make sure your user agent checks are correct so that you don’t accidentally deliver the wrong content.
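A user agent check of this kind can be kept safe by branching only on device hints and never on crawler names, so that a crawler receives the same variant as the matching device class. The sketch below is a simplified illustration: the regular expression, the function names and the `X-Layout` header are hypothetical, while `Vary: User-Agent` is the standard HTTP way to signal that the response depends on the user agent.

```python
import re

# Deliberately contains no crawler names: bots are classified by the
# same device hints as any other client.
MOBILE_HINT = re.compile(r"Mobi|Android|iPhone", re.IGNORECASE)


def select_variant(user_agent):
    """Pick a layout variant from device hints in the User-Agent string."""
    return "mobile" if MOBILE_HINT.search(user_agent or "") else "desktop"


def response_headers(user_agent):
    """Headers for a response whose layout depends on the user agent."""
    return {
        "Vary": "User-Agent",  # tells caches and crawlers the response varies
        "X-Layout": select_variant(user_agent),  # hypothetical debug header
    }


IPHONE_UA = "Mozilla/5.0 (iPhone; CPU iPhone OS 17_0 like Mac OS X)"
GOOGLEBOT_DESKTOP_UA = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"

print(select_variant(IPHONE_UA))
print(select_variant(GOOGLEBOT_DESKTOP_UA))
```

The variants should differ in layout only. If the text or links differ between them, the consistency problem described above returns.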

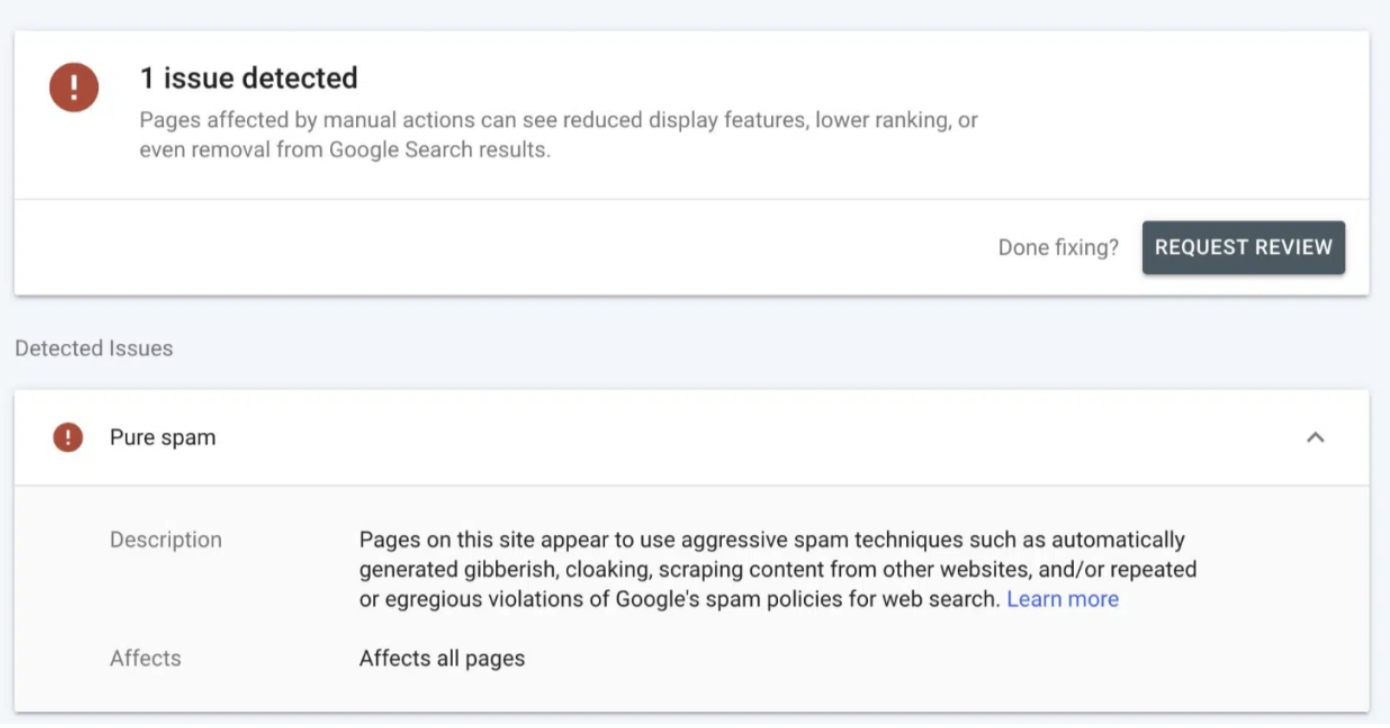

Testing and monitoring: Regular testing and monitoring of your website is important. Use tools like Google Search Console to detect and fix potential cloaking issues. This is what it might look like in your GSC if cloaking is suspected:

Transparent practices: If you use personalised content, be transparent with users and tell them why the content is personalised. Users should also have an option to adjust it.

Cloaking may seem tempting at first glance, but the risks often outweigh the potential benefits. Website operators should rely on transparent and ethical SEO practices to achieve long-term success.
